Lecture 9

Presenter

- Name: Natan Vidra

- Topic: Human-Centered AI and Building Practical AI Systems

- Description: In the final lecture of the AI Academy series, Natan Vidra presents an overview of Human-Centered AI and how it informs the design of practical, reliable AI systems. The talk focuses on bridging the gap between powerful AI models and real-world applications. Natan explains why raw models alone are not sufficient for production systems, and how human expertise, structured data, evaluation, and workflow design must be integrated into the development process.

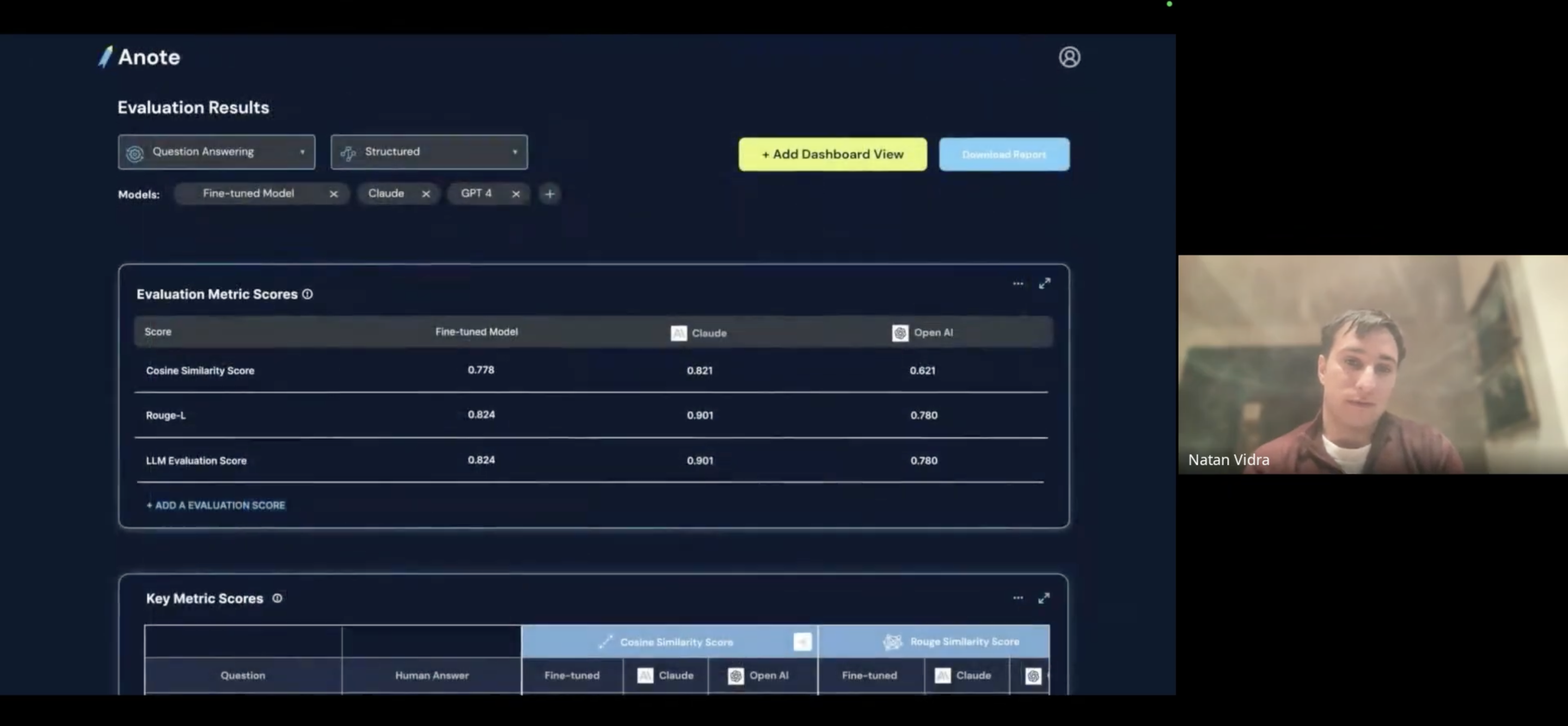

The lecture introduces Anote’s core platform as an example of a Human-Centered AI MLOps system that helps organizations transform unstructured data into high-quality training and evaluation datasets. Natan discusses how human-in-the-loop annotation, evaluation frameworks, and domain expertise can significantly improve model reliability on domain-specific tasks such as classification, information extraction, and question answering.

Natan also reviews several systems built within the Anote ecosystem that demonstrate how these ideas translate into real products. These include the open-source Autonomous Intelligence framework for multi-agent systems, the Model Leaderboard used to evaluate and benchmark models on real tasks, and the Synthetic Data API for generating datasets used to train and evaluate models. Together, these tools illustrate how AI infrastructure, evaluation, and data generation can work together to enable robust and practical AI deployments.

The lecture concludes with a discussion about future opportunities to contribute to these efforts, including research collaboration, internships, and participation in ongoing projects building AI systems that combine strong models with human expertise.

Recording

Key Takeaways

- Human-Centered AI integrates human expertise into the AI lifecycle to improve model reliability, evaluation, and performance in real-world settings.

- Building practical AI systems requires more than powerful models; it requires high-quality data, evaluation frameworks, and well-designed workflows.

- Tools such as multi-agent systems, model benchmarking platforms, and synthetic data generation can help bridge the gap between cutting-edge AI research and real-world applications.